A (potential) coaching system for climbing

TL;DR

Last night at Mosaic, I was chatting with Tim Kang after he coached me through a movement I had been struggling with. His explanation was analytical and pinpointed the exact issue he noticed. Once I understood it, I was able to practice the move differently and complete the section I'd been stuck on.

Afterward, I showed him some of the video analysis features I have been building for Spray, along with a few ideas around AI-assisted climbing analysis. One of the longer-term ideas is a virtual climber: an agent that represents a climber’s physical attributes and tries to move through a reconstructed 3D problem. I wrote briefly about that direction before in my working log on bouldering and computer vision.

What made the conversation surprising was that Tim had been approaching a similar question from the coaching side: given a climbing video, what should we look at in order to say something actually useful?

He also mentioned that, when coaching clients, he often finds himself returning to similar types of feedback. That does not make coaching simple or deterministic, but it suggests that some parts of expert judgment may have recurring structure. A coach sees body position, timing, tension, sequence, and failure points together. The hard question is whether software can learn to represent enough of that context to become useful.

Framing the Problem Space

That convergence is what this post is about. I want to distill the notes, references, and experiments I’ve been collecting, while reflecting on how Tim’s coaching perspective led me to rethink the direction.

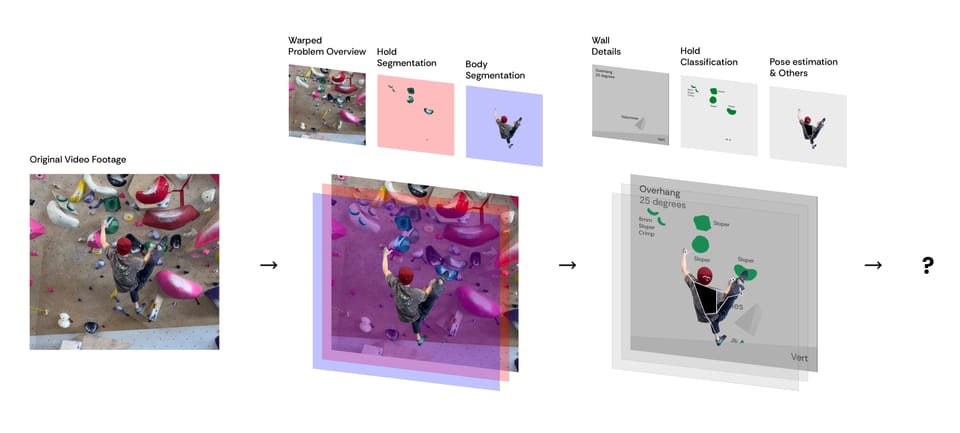

The central problem, as I see it, is how to represent and reason about climbing movement well enough to produce coaching that is both technically grounded and actionable. That breaks into a set of smaller questions: what the system needs to know about the climber and the problem, what it can reliably extract from video, how it should evaluate movement, and how those observations can be turned into useful feedback.

Tim approaches the problem from the coaching side: what does good movement look like, and why do certain mistakes repeat across climbers? I have been approaching it from the system side: what information would software need in order to observe, compare, and eventually reason about those movements?

The layers I currently have in mind are:

- Problem & climber context

- Video measurement

- Movement interpretation

- Coaching feedback

- (Maybe) simulation

Q1: What does the system need to know?

A climbing video is an incomplete representation of a climb. Most videos we see on Instagram, Kaya, or other platforms are captured in 2D image space. From that alone, it is difficult to know how hard the problem is, what the wall angle is, how positive or friction-dependent a hold is, or whether a movement is realistic for a specific climber.

A coach fills in those gaps through experience. In machine learning terms, an experienced coach has years of “pre-trained weights” from climbing, watching, failing, adjusting, and teaching. They are reading the wall, the holds, the body type, the intended movement, and the failure point together.

For a system to do something similar, it needs context from both the problem and the climber.

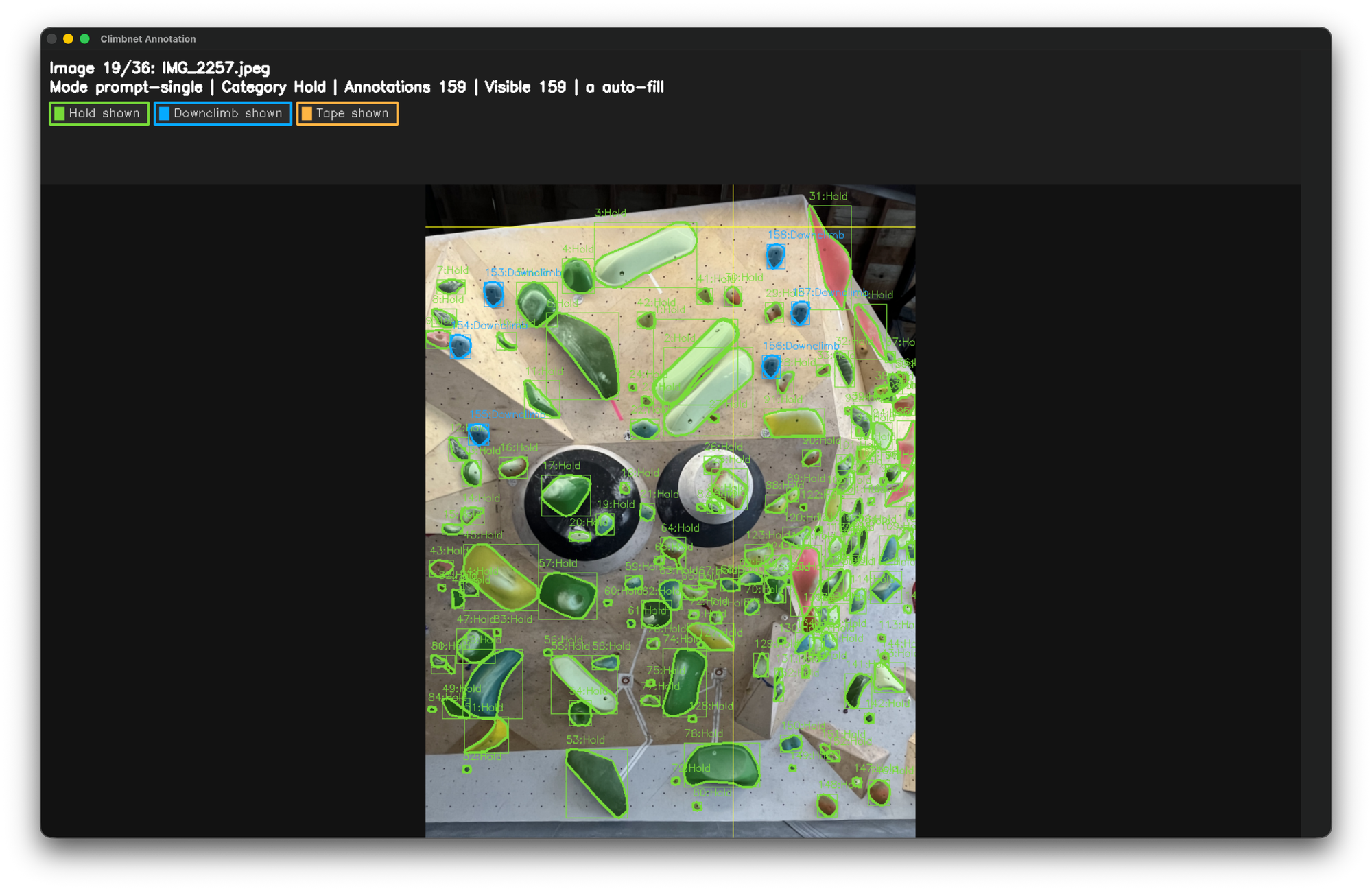

On the problem side, this could include hold positions, shape, texture, wall angle, distance between holds, and the role of each hold in the climb. A sloper on slab, a sloper on vertical terrain, and a sloper on a steep overhang may be visually similar, but they create very different movement requirements.

On the climber side, the system needs to understand that movement is body-dependent. Height, reach, flexibility, mobility, strength, and injury constraints all affect which beta is realistic. A move that looks straightforward for one climber may be reach-limited for another. A boxed position may be comfortable for one body type and impossible for another. Even psychological factors, such as hesitation before committing to a dynamic movement, can affect the outcome, although those may be harder to model at the beginning.
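One way to picture this context is as a small data structure. The sketch below is illustrative only: every field name, the wall-angle sign convention, and the 0.9 wingspan margin are assumptions made up for the example, not a schema from Spray or any existing tool.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Hold:
    """One hold on the wall. Fields are illustrative placeholders."""
    hold_id: str
    x_m: float          # horizontal position on the wall plane, in meters
    y_m: float          # height on the wall plane, in meters
    hold_type: str      # e.g. "sloper", "crimp", "jug"
    role: str = "any"   # e.g. "start", "hand", "foot", "finish"


@dataclass
class ProblemContext:
    # Assumed convention: 0 = vertical, negative = slab, positive = overhang.
    wall_angle_deg: float
    holds: List[Hold] = field(default_factory=list)

    def distance_m(self, a: str, b: str) -> float:
        """Straight-line distance between two holds on the wall plane."""
        by_id = {h.hold_id: h for h in self.holds}
        ha, hb = by_id[a], by_id[b]
        return ((ha.x_m - hb.x_m) ** 2 + (ha.y_m - hb.y_m) ** 2) ** 0.5


@dataclass
class ClimberContext:
    height_m: float
    ape_index_m: float = 0.0             # wingspan minus height
    injury_notes: Optional[str] = None

    @property
    def wingspan_m(self) -> float:
        return self.height_m + self.ape_index_m


def is_span_limited(problem: ProblemContext, climber: ClimberContext,
                    a: str, b: str, margin: float = 0.9) -> bool:
    """Crude check: is the hold-to-hold distance beyond a fraction of wingspan?"""
    return problem.distance_m(a, b) > margin * climber.wingspan_m


problem = ProblemContext(
    wall_angle_deg=20.0,
    holds=[Hold("A", 0.0, 1.0, "crimp"), Hold("B", 1.7, 1.0, "sloper")],
)
shorter = ClimberContext(height_m=1.60)
taller = ClimberContext(height_m=1.85, ape_index_m=0.10)
```

With these made-up numbers, the same pair of holds is span-limited for the shorter climber but not for the taller one. A real check would also need feet, hip position, and wall angle, which this deliberately ignores.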

Q2: What can video tell us, and what does it mean?

Once we have context about the climber and the problem, the next question is what video can actually measure.

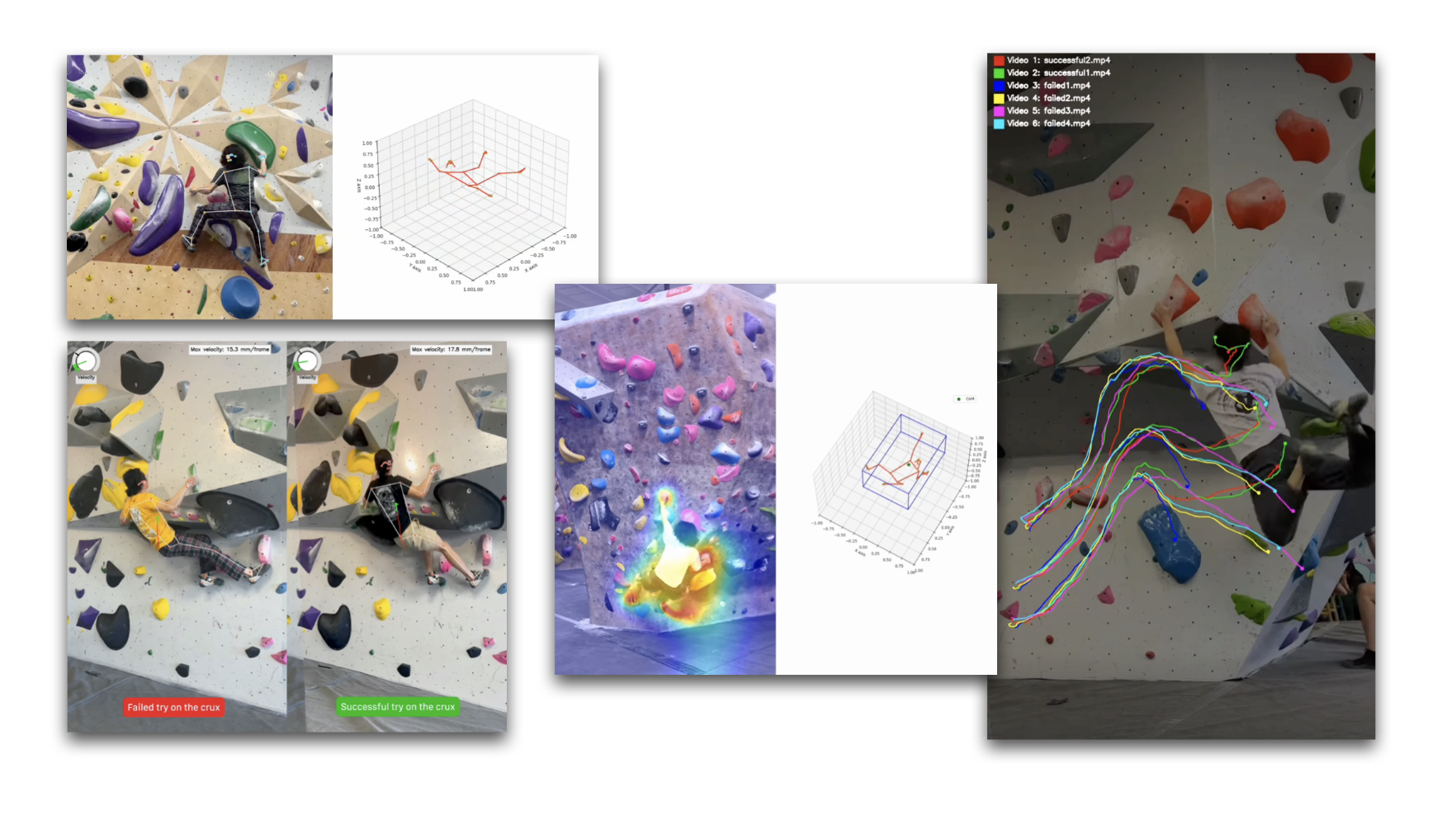

This is where pose estimation and trajectory tracking become useful. Pose estimation tools such as MediaPipe and ViTPose can already detect body landmarks, often even when parts of the body are occluded, and smoothing techniques such as SmoothNet can reduce the frame-to-frame jitter that often appears in raw pose estimation. I have also been experimenting with this in my own climbing analysis toolbox, using pose overlays, joint trajectories, and velocity heatmaps to visualize attempts.
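To show what "reducing jitter" means in practice, here is a minimal sketch that smooths one landmark's track with a centered moving average. This is a deliberately simple stand-in, not SmoothNet (which is a learned model); the function name and the default window size are assumptions for illustration.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def smooth_track(track: List[Point], window: int = 5) -> List[Point]:
    """Centered moving average over one landmark's (x, y) track.

    The window is clipped at both ends, so the output has the same
    length as the input.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd number")
    half = window // 2
    smoothed = []
    for i in range(len(track)):
        lo = max(0, i - half)
        hi = min(len(track), i + half + 1)
        n = hi - lo
        smoothed.append((
            sum(p[0] for p in track[lo:hi]) / n,
            sum(p[1] for p in track[lo:hi]) / n,
        ))
    return smoothed
```

A larger window removes more jitter but also blurs fast, real movement such as a dyno, so the window would need tuning against the video frame rate.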

Even simple tools can be surprisingly useful. I often use QuickTime because the MacBook trackpad makes it easy to scrub through frames and inspect the nuance of a move. The interaction is primitive, but effective: slow down the attempt, isolate the key frames, and ask what changed.

Some of my previous attempts tried to provide enough context to understand the problem and the movement. They were meant to help me document my climbs and also provide detailed beta to others.

A more useful way to think about this layer is not simply as “pose estimation,” but as a set of movement measurements. From video, the system may be able to extract metrics such as:

- Hip path: how the hips travel through a move, especially whether the climber shifts weight before reaching.

- Timing: when the climber initiates a hand movement relative to foot placement, hip movement, or body tension.

- Contact stability: how long the hands and feet stay on holds, when contacts break, and whether foot cuts or hand slips happen repeatedly.

- Sequence: the order of hand and foot movements, and whether a failed attempt differs from a successful one.

- Velocity and acceleration: whether the climber moves with control, hesitation, or a sudden uncontrolled swing.

- Body shape: joint angles, torso orientation, arm bend, knee position, and whether the climber is extended, compressed, twisted, or boxed in.

- Attempt consistency: whether the climber repeats the same movement pattern across attempts, or whether each attempt breaks down in a different way.
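A couple of the measurements above can be sketched directly from 2D keypoints. The example below assumes per-frame left and right hip landmarks are already available as `(x, y)` pairs and that the frame rate is known; the function names and the pause threshold are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def hip_path(left_hip: List[Point], right_hip: List[Point]) -> List[Point]:
    """Midpoint of the two hip landmarks in every frame."""
    return [((l[0] + r[0]) / 2, (l[1] + r[1]) / 2)
            for l, r in zip(left_hip, right_hip)]


def frame_speeds(path: List[Point], fps: float) -> List[float]:
    """Speed between consecutive frames, in path units per second."""
    return [math.dist(a, b) * fps for a, b in zip(path, path[1:])]


def longest_pause(speeds: List[float], threshold: float) -> int:
    """Longest run of consecutive intervals slower than `threshold`."""
    best = run = 0
    for s in speeds:
        run = run + 1 if s < threshold else 0
        best = max(best, run)
    return best
```

Speeds here are in image units (pixels or normalized coordinates) per second, so they are only comparable between attempts filmed from the same camera position.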

These metrics are not yet coaching conclusions. They tell us what happened, but not necessarily what it means.

This is where coaching judgment enters: interpreting why a movement helped or failed. A foot cut, for example, is not just an event where the foot leaves the wall. It may be the visible result of the hip staying too far from the supporting foot, the climber pulling too early with the arms, the hand sequence forcing a bad body position, or simply a hold that is too poor to maintain tension from that angle.

Good coaching depends on relationships between signals: hip position, timing, tension, sequence, and the specific failure point.

I do not yet have a complete rubric, but a few candidate dimensions seem worth testing:

- Hip trajectory, $H(t)$: especially whether the hip moves toward the supporting foot before the reach.

- Contact timing, $C_i(t)$ for each limb $i$: especially whether the foot stays engaged until the target hand contact is made.

- Foot re-adjustments, $N_{\text{adjustments}}$: how many times the climber resets a foot before committing to the move.

- Arm initiation, $\theta_{\text{elbow}}(t)$: especially whether the elbow bends before the hips or lower body begin to generate movement.

- Loss of tension: a transition where $C_{\text{foot}}(t)$ changes from contact to no contact, followed by a spike in hip velocity $\|dH/dt\|$.

These mappings are hypotheses. The same signal can mean different things depending on the problem, the climber, and the intended beta. But they could give us a starting point for turning raw video measurements into interpretable movement dimensions.
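Two of these candidate dimensions are simple enough to sketch. The code below assumes a per-frame boolean foot-contact signal and a per-frame hip speed already exist (both are hard to estimate in practice), and the threshold and lookahead values are placeholders rather than tested numbers.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def elbow_angle_deg(shoulder: Point, elbow: Point, wrist: Point) -> float:
    """Interior angle at the elbow: 180 is a straight arm, smaller is more bent."""
    ux, uy = shoulder[0] - elbow[0], shoulder[1] - elbow[1]
    vx, vy = wrist[0] - elbow[0], wrist[1] - elbow[1]
    cross = ux * vy - uy * vx
    dot = ux * vx + uy * vy
    # atan2(|cross|, dot) avoids the rounding problems of acos near 0 and 180.
    return math.degrees(math.atan2(abs(cross), dot))


def loss_of_tension_frames(foot_contact: List[bool], hip_speed: List[float],
                           spike_threshold: float, lookahead: int = 3) -> List[int]:
    """Frames where a foot leaves the wall and hip speed spikes soon after.

    Both lists are indexed by frame and assumed to have the same length.
    """
    events = []
    for t in range(1, len(foot_contact)):
        released = foot_contact[t - 1] and not foot_contact[t]
        window = hip_speed[t:t + lookahead]
        if released and any(s > spike_threshold for s in window):
            events.append(t)
    return events
```

Note that this rule would also flag a deliberate foot release followed by a controlled swing, which is exactly why these signals are hypotheses that need context and expert labels before they count as "loss of tension."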

There are already public experiments in this direction, such as @oreshihon.atsushi.iida’s radar chart and scoring system based on pose analysis. I still have questions about how those metrics are defined, how precise they are, and whether they generalize across climbs, but the direction is compelling: can movement quality be represented through interpretable dimensions instead of raw pose data?

This is also where Tim’s coaching experience feels especially relevant. If he repeatedly returns to certain types of feedback across different climbers and problems, those patterns may reveal the structure this layer needs. The goal would not be to replace expert judgment, but to understand it well enough that a system can support it.

So the research question here becomes:

Which movement signals can be reliably extracted from climbing video, and which of those signals are stable enough to become useful movement metrics with grading rubrics?

Some signals feel realistic in the near term: joint trajectories, timing differences between attempts, rough hip movement, hand and foot movement, contact estimation, and sequence comparison. Other signals are much harder: true force through each limb, precise distance from the wall, exact center of mass in 3D, hold friction, and body tension. Those may require richer reconstruction, granular annotation, or physical modeling.

Q3: How does evaluation become useful feedback?

I think actionable feedback does three things: (1) identifies the issue, (2) explains why it matters, and (3) gives the climber a concrete adjustment. Ideally, the system should also point to the relevant moment in the video, so the climber can connect the explanation to what they actually did.

The evaluation layer could then ask a more climbing-specific set of questions:

- What changed between attempts? Did the successful attempt shift the hip earlier? Did the climber move the foot before reaching? Did they maintain contact longer? Did they initiate the hand movement only after creating enough lower-body tension?

- Did the change correlate with success or failure? For example, did the climber only succeed when the right foot stayed engaged until the next hand made contact? Did every failed attempt involve the same early foot cut? Did the climber hesitate before committing to the move?

- What should the climber try next? The final output should be a specific adjustment, not just a score. “Move faster” can be vague. “Place the left foot first, shift your hip over it, then reach with your right hand” is much more useful.

Comparison feels like one of the most practical near-term directions. By comparing two attempts, or an attempt against a beta video, the tool can start with a simpler question: what changed, and which differences seemed to matter?

For example, it might compare hip timing, hand and foot contact, foot cuts, arm initiation, center-of-mass direction, and pauses or readjustments before the crux.
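As a rough illustration of "what changed," the sketch below compares two attempts that have each been reduced to named events with timestamps. The event names and the idea of representing an attempt as a dictionary are assumptions for the example; detecting those events from video is the hard part and is not shown.

```python
from typing import Dict, List, Tuple


def order_flips(reference: Dict[str, float],
                attempt: Dict[str, float]) -> List[Tuple[str, str]]:
    """Pairs of shared events whose relative order differs between attempts."""
    shared = sorted(set(reference) & set(attempt))
    flips = []
    for i, a in enumerate(shared):
        for b in shared[i + 1:]:
            # Opposite signs mean the two events swapped order.
            if (reference[a] - reference[b]) * (attempt[a] - attempt[b]) < 0:
                flips.append((a, b))
    return flips


def only_in(first: Dict[str, float], second: Dict[str, float]) -> List[str]:
    """Events that appear in `first` but never happen in `second`."""
    return sorted(set(first) - set(second))


# Hypothetical event times in seconds from the start of each clip.
success = {"hip_over_left_foot": 1.2, "right_hand_leaves": 1.5}
failure = {"hip_over_left_foot": 1.6, "right_hand_leaves": 1.3,
           "left_foot_cut": 1.7}

flips = order_flips(success, failure)
extra = only_in(failure, success)
```

Here `flips` captures that the hand moved before the hip shift in the failed attempt, and `extra` captures that the foot cut happened only in that attempt.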

These comparisons could become the basis for structured feedback. Instead of saying, “your attempt was worse than the beta,” the system should say:

Observation (cause and result): Compared with the successful attempt, your right hand started moving before your hips shifted over the left foot. That made the reach happen from a stretched position, so your left foot cut as soon as you pulled.

Suggestion: Try moving the hip first, then reaching.
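The same feedback could be stored as a small record so that each piece (the moment in the video, the observation, why it matters, and the adjustment) stays separate. This is a minimal sketch; the class and field names are hypothetical, and the timestamp is a made-up value.

```python
from dataclasses import dataclass


@dataclass
class Feedback:
    timestamp_s: float     # where in the video the issue is visible
    observation: str       # what happened
    why_it_matters: str    # the consequence for the move
    suggestion: str        # one concrete adjustment to try next

    def render(self) -> str:
        return (
            f"[{self.timestamp_s:.1f}s] Observation: {self.observation}\n"
            f"Why it matters: {self.why_it_matters}\n"
            f"Suggestion: {self.suggestion}"
        )


example = Feedback(
    timestamp_s=12.4,
    observation="Your right hand started moving before your hips shifted "
                "over the left foot.",
    why_it_matters="The reach happened from a stretched position, so your "
                   "left foot cut as soon as you pulled.",
    suggestion="Try moving the hip first, then reaching.",
)
```

Keeping the fields separate would also let a coach correct just one of them, for example agreeing with the observation but rewriting the suggestion.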

For Spray, this feels like a realistic product path: start by helping climbers see movement more clearly through pose overlays, trajectories, frame-by-frame comparison, and structured observations. From there, the system can move toward evaluation and coaching.

The question becomes:

How can movement metrics be evaluated in context so they produce feedback specific enough to change the climber’s next attempt?

I think the answer requires computer vision, expert annotation, and an intuitive user interface.

Q4: Could this eventually become a virtual climber?

The final layer is simulation. This is the most ambitious direction, and probably not the right starting point, but it is useful as a long-term research horizon.

The reinforcement learning version of the question is:

Given a climber’s body and a 3D problem, what movement strategy could solve this climb?

This is different from the coaching question:

Given a video of a climber attempting a problem, what are they doing well or poorly, and what should they change?

A simulation system would require a virtual climber, a reconstructed problem, and a model of physical constraints: wall geometry, hold properties, body dimensions, contact points, gravity, momentum, and failure conditions. In theory, a reinforcement learning environment could allow the virtual climber to try many possible movement strategies and discover one or more feasible betas.
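To make the shape of such an environment concrete, here is a heavily simplified toy. It models hands only, ignores feet, gravity, momentum, and friction, and treats a reach as legal whenever the two hand holds are within the climber's span. The class name, the reward values, and the `reset`/`step` shape are assumptions loosely modeled on common RL interfaces, and the search is plain breadth-first search standing in for a learned policy.

```python
import math
from collections import deque
from typing import List, Optional, Tuple

Hold = Tuple[float, float]   # (x, y) in meters on the wall plane
State = Tuple[int, int]      # (left-hand hold index, right-hand hold index)
Action = Tuple[int, int]     # (hand: 0 = left, 1 = right, target hold index)


class ToyClimbEnv:
    def __init__(self, holds: List[Hold], span_m: float, start: State, top: int):
        self.holds, self.span_m, self.start, self.top = holds, span_m, start, top
        self.state = start

    def reset(self) -> State:
        self.state = self.start
        return self.state

    def legal(self, state: State, action: Action) -> bool:
        """A move is legal if the target is within span of the other hand."""
        hand, target = action
        other = state[1 - hand]
        return math.dist(self.holds[target], self.holds[other]) <= self.span_m

    def step(self, action: Action) -> Tuple[State, float, bool]:
        """Return (state, reward, done). An illegal reach counts as a fall."""
        if not self.legal(self.state, action):
            return self.state, -1.0, True
        hand, target = action
        nxt = list(self.state)
        nxt[hand] = target
        self.state = (nxt[0], nxt[1])
        done = self.top in self.state
        return self.state, (1.0 if done else 0.0), done


def shortest_beta(env: ToyClimbEnv) -> Optional[List[Action]]:
    """Breadth-first search for the fewest hand moves that reach the top hold."""
    start = env.reset()
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, path = queue.popleft()
        if env.top in state:
            return path
        for hand in (0, 1):
            for target in range(len(env.holds)):
                action = (hand, target)
                if target == state[hand] or not env.legal(state, action):
                    continue
                nxt = (target, state[1]) if hand == 0 else (state[0], target)
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append((nxt, path + [action]))
    return None


# Made-up problem: two start holds, one intermediate hold, one top hold.
holds = [(0.0, 1.0), (0.4, 1.0), (0.2, 1.9), (0.4, 2.8)]
tall_beta = shortest_beta(ToyClimbEnv(holds, span_m=1.9, start=(0, 1), top=3))
short_beta = shortest_beta(ToyClimbEnv(holds, span_m=1.2, start=(0, 1), top=3))
```

Even in this toy, the longer span reaches the top hold in one move while the shorter span has to use the intermediate hold, which is a small version of the body-dependent beta described earlier.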

There are adjacent projects that make this direction feel conceptually possible, even if climbing itself is much more complex. Researchers at Stanford have explored musculoskeletal modeling through tools like OpenSim/OpenCap, which can estimate how muscles are used during human movement. On the experimental side, videos from creators like AI Warehouse show virtual agents learning to solve simple movement tasks, and toolkits such as OpenAI Gym (now maintained as Gymnasium) provide a standard interface for training agents through rewards and penalties.

Climbing, however, is much harder than many toy control problems. The contact surface is irregular. Holds vary in texture, shape, and orientation. Friction depends on force, skin, chalk, sweat, and contact angle. The same move may require different beta depending on the climber’s body. We would need to define the granularity of each of these attributes in order to iteratively derive a workable RL setup.

There is also rarely a single optimal solution; many problems support multiple valid movement strategies. And that is part of what makes climbing interesting.

Right now, the more realistic path is to build the lower layers first:

- pose and trajectory analysis

- movement metrics

- expert-labeled evaluation

- coaching feedback

- structured movement dataset

- simulation or reinforcement learning later

In this framing, coaching analysis may eventually become the foundation for simulation. If we can understand how expert coaches evaluate movement, those evaluations could help define rewards, penalties, constraints, or priors for a virtual climber agent.

Writing this down clarified the shape of the problem for me, and I’m really glad we had that conversation. What I am most curious about now is where Tim would draw the lines differently: which metrics he thinks matter most, and what a system would need to catch before he would actually trust it as a coaching tool.

Tim has also shared many tips and insights through his episodes on Testpiece. I think there may be something interesting to explore there too: if those conversations were transcribed, we could study which movement concepts, training themes, and coaching patterns come up most often.

I remember Tim shared something along the lines of: many great climbers climb by instinct, and they may not always be able to identify exactly what makes them succeed on certain moves. That idea really stuck with me. Hopefully, we can eventually build a system that helps translate the experience of great climbers and coaches into tailored coaching for everyone.